FREE DOWNLOAD

Securing the edge: infrastructure, applications and secrets management

Many hybrid solutions run application components at the edge alongside a counterpart in the central cloud. Both parts are equally important to operating the application, but the security measures they need differ considerably between centralized environments and the more exposed edge.

This calls for new and higher requirements for protecting data living on edge infrastructure, and in this white paper, you’ll learn how to master your security posture at the on-site edge.

WHAT WE DO

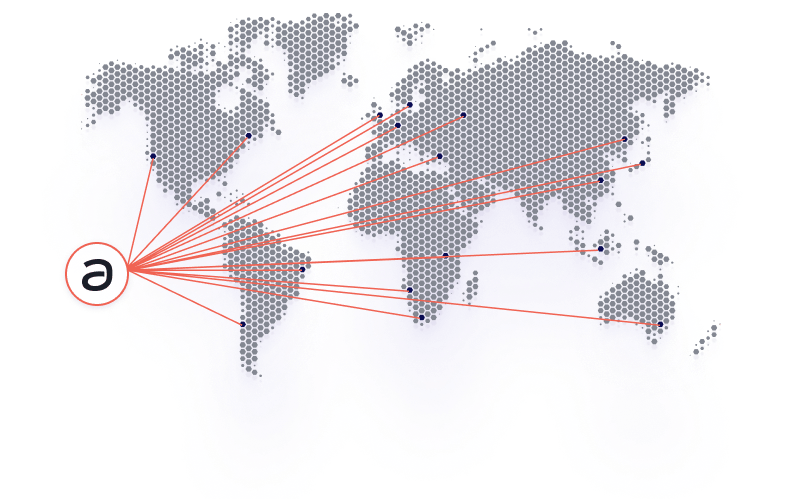

Simple, secure, and scalable container management in 10s, 100s, or 10 000s of on-site locations

If you’re planning to operate applications in many locations, tooling originally built for the cloud won’t help you achieve efficient management and operational excellence.

Avassa provides an application management and operations platform for your on-site edge. We make edge management and orchestration easy and offer unique, purpose-built functionality for distributed edge environments.

WHY EDGE?

There is no turning back.

You need to move at the speed of software.

Enterprises expect feature velocity that matches the expectations set by the central cloud. While you may have moved significant parts of your applications to one or more public clouds, many of your application components will run much better at the edge. This, however, should not limit your ability to innovate or the speed at which you do it. With Avassa, you can increase efficiency and lower the overhead costs of edge application management.

HOW IT WORKS

Be up and running faster than you can say edge application orchestration

Setting up new sites is a breeze when you bring the Avassa Edge Enforcer agent into play. Imagine a handy container that takes charge of the local container runtime on each of your hosts. It acts as an agent, handling everything from making sure your containers play nice together to securing data.

Installing the Edge Enforcer is as straightforward as it gets, thanks to its automated, zero-trust architecture that avoids the hassle of manual and host-specific setups. Once in place, each Edge Enforcer calls home to the Control Tower and receives all the configuration and keys it needs to keep data safe and sound, not just for the site, but for all its users.

The next step is to craft the blueprint of your containerized application in an application specification. These specifications come in the well-known forms of YAML or JSON, letting you configure them using existing CI/CD tooling or, for a more hands-on approach, through the Control Tower’s web interface.
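To make the idea of a specification authored from CI/CD tooling concrete, here is a minimal Python sketch. The field names (`name`, `services`, `replicas`, `containers`, `image`), the `build_app_spec` helper, and the registry URL are illustrative assumptions, not the platform’s actual schema; the platform documentation is the place to look for the real format.

```python
import json


def build_app_spec(name: str, image: str, replicas: int = 1) -> dict:
    """Build a hypothetical application specification as a plain dict.

    The structure is an assumption made for illustration only.
    """
    if replicas < 1:
        raise ValueError("replicas must be at least 1")
    return {
        "name": name,
        "services": [
            {
                "name": f"{name}-service",
                "replicas": replicas,
                "containers": [{"name": name, "image": image}],
            }
        ],
    }


# Hypothetical application name and image reference.
spec = build_app_spec("pos-terminal", "registry.example.com/pos-terminal:1.0")

# Serialize to JSON, one of the two formats mentioned above.
spec_json = json.dumps(spec, indent=2, sort_keys=True)
print(spec_json)
```

Generating the document from code like this is one way a pipeline could keep specifications under version control and review them like any other change.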

When it’s time to decide where your application should operate, you use application deployment specifications. This is a comprehensive way to tell the system where (in which sites) a specific application should operate. With a bit of simple matching language magic, you can specify where and under what conditions it runs (think location, hardware specs, or how many resources you have available), so your application is placed only where it makes sense and provides value.
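The matching idea can be sketched in a few lines of Python. This is not the platform’s matching language: the `Site` class, its `labels` and `free_memory_mb` fields, and the `matching_sites` function are hypothetical names used only to show how label and resource conditions narrow down a set of sites.

```python
from dataclasses import dataclass, field


@dataclass
class Site:
    """A hypothetical site record with labels and available memory."""
    name: str
    labels: dict = field(default_factory=dict)
    free_memory_mb: int = 0


def matching_sites(sites, required_labels, min_free_memory_mb=0):
    """Return the names of sites that carry every required label value
    and have at least the requested amount of free memory."""
    return [
        s.name
        for s in sites
        if all(s.labels.get(k) == v for k, v in required_labels.items())
        and s.free_memory_mb >= min_free_memory_mb
    ]


sites = [
    Site("stockholm-store-1", {"region": "eu", "gpu": "yes"}, 4096),
    Site("stockholm-store-2", {"region": "eu", "gpu": "no"}, 8192),
    Site("austin-store-1", {"region": "us", "gpu": "yes"}, 1024),
]

selected = matching_sites(
    sites, {"region": "eu", "gpu": "yes"}, min_free_memory_mb=2048
)
print(selected)  # ['stockholm-store-1']
```

Expressing placement as conditions rather than a fixed list of sites means a newly added site that meets the conditions would be picked up without editing the deployment.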

Once your applications are deployed, keeping an eye on their health becomes crucial, and sometimes you need to dig into the forensics of incidents that may occur. Every container in your deployment is under constant surveillance by the platform, ensuring everything runs smoothly not just at the container level but also through optional health checks tailored to your application’s unique needs. When things go sideways, the system doesn’t just throw alerts at you; it tells you exactly which container is down, which application it’s a part of, and where it’s located. This way, you’re not just getting a warning; you’re getting a roadmap to the problem.
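As a rough illustration of an alert that carries its own context, the Python sketch below turns per-container status records into messages naming the container, the application, and the site. The `ContainerStatus` class, its fields, and the message format are assumptions for this example and do not describe the platform’s actual alert format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ContainerStatus:
    """A hypothetical status record for one container at one site."""
    site: str
    application: str
    container: str
    running: bool
    health_check_ok: bool = True  # result of an optional application-level check


def build_alerts(statuses):
    """Create one alert line per unhealthy container, with full context."""
    alerts = []
    for s in statuses:
        if not s.running:
            reason = "container is down"
        elif not s.health_check_ok:
            reason = "health check failing"
        else:
            continue  # healthy containers produce no alert
        alerts.append(
            f"{reason}: container={s.container} "
            f"application={s.application} site={s.site}"
        )
    return alerts


statuses = [
    ContainerStatus("stockholm-store-1", "pos-terminal", "payments", running=True),
    ContainerStatus("stockholm-store-2", "pos-terminal", "payments", running=False),
    ContainerStatus("austin-store-1", "digital-signage", "player",
                    running=True, health_check_ok=False),
]

alerts = build_alerts(statuses)
for line in alerts:
    print(line)
```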

Then there is observability. With distributed log queries, you can pull up historical data or catch the live action from wherever your operations are spread out. You’re not drowning in data; you’re strategically filtering by time, what’s in the logs, where it happened, and more, so you’re only ever dealing with information that directly ties back to whatever challenge you’re facing right now.
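The filtering described here can be sketched in Python over an in-memory list. A real distributed query would fan out to the sites themselves; this example only shows the filtering logic, and the `LogEntry` class, the `query_logs` function, and the sample data are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class LogEntry:
    """A hypothetical log line tagged with its time and originating site."""
    timestamp: datetime
    site: str
    message: str


def query_logs(entries, since=None, contains=None, sites=None):
    """Filter log entries by time, content, and site; any filter may be omitted.

    Results are returned oldest first.
    """
    result = []
    for e in entries:
        if since is not None and e.timestamp < since:
            continue
        if contains is not None and contains not in e.message:
            continue
        if sites is not None and e.site not in sites:
            continue
        result.append(e)
    return sorted(result, key=lambda e: e.timestamp)


base = datetime(2024, 1, 15, 12, 0, 0)  # arbitrary reference time for the sample data
entries = [
    LogEntry(base, "stockholm-store-1", "payment accepted"),
    LogEntry(base + timedelta(minutes=5), "austin-store-1", "payment timeout"),
    LogEntry(base + timedelta(minutes=10), "stockholm-store-1", "payment timeout"),
    LogEntry(base - timedelta(hours=2), "stockholm-store-1", "payment timeout"),
]

hits = query_logs(
    entries, since=base, contains="timeout", sites={"stockholm-store-1"}
)
for e in hits:
    print(e.timestamp.isoformat(), e.site, e.message)
```

Combining the three filters leaves a single entry out of four, which is the point of the approach: narrow the data down before anyone has to read it.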

WHAT MAKES US DIFFERENT?

Opinionated and user-friendly

We don’t overcomplicate things. With Avassa, it’s easy to deploy containerized applications across large-scale edge clouds in a few clicks.

Distributed security you can trust

We built our platform on zero-trust with a layered security model across sites and tenants, and eliminated any time-consuming manual work.

Application-centric operations

Our platform was built with the needs of application operations at the forefront, freeing you from the massive complexities associated with infrastructure-centric solutions.

Recent resources

Applications first – The Rise of Container Applications in Industrial IoT

The landscape of Industrial IoT is experiencing a shift. We’re moving away from proprietary systems towards Linux and fully containerized, future-proof industrial IoT applications. Customer expectations are also evolving, with…

Most read edge computing articles of 2023

2023 has come to an end, but the knowledge and insights remain! Below, we have gathered some of our most read articles of 2023. So be our guest, and take a…

Avassa’s 2023 Holiday Crackers and Key Takeaways

Edge computing as a generic concept can be argued to be at least as old as the cash register, but the last couple of years have seen a radical uptake…

DIVE INTO THE DETAILS

Looking for our platform documentation?

Dive deep into the details of how our solution works in our platform documentation.

GET TO KNOW US

We are Avassa

Avassa empowers businesses to bridge the gap between modern containerized application development and operations and the distributed edge infrastructure. Based in Stockholm, Sweden, Avassa was founded in 2020 and is a privately held company funded by Fairpoint Capital and Industrifonden.