What Is Data Harvesting and Why Is It the Next Competitive Advantage?

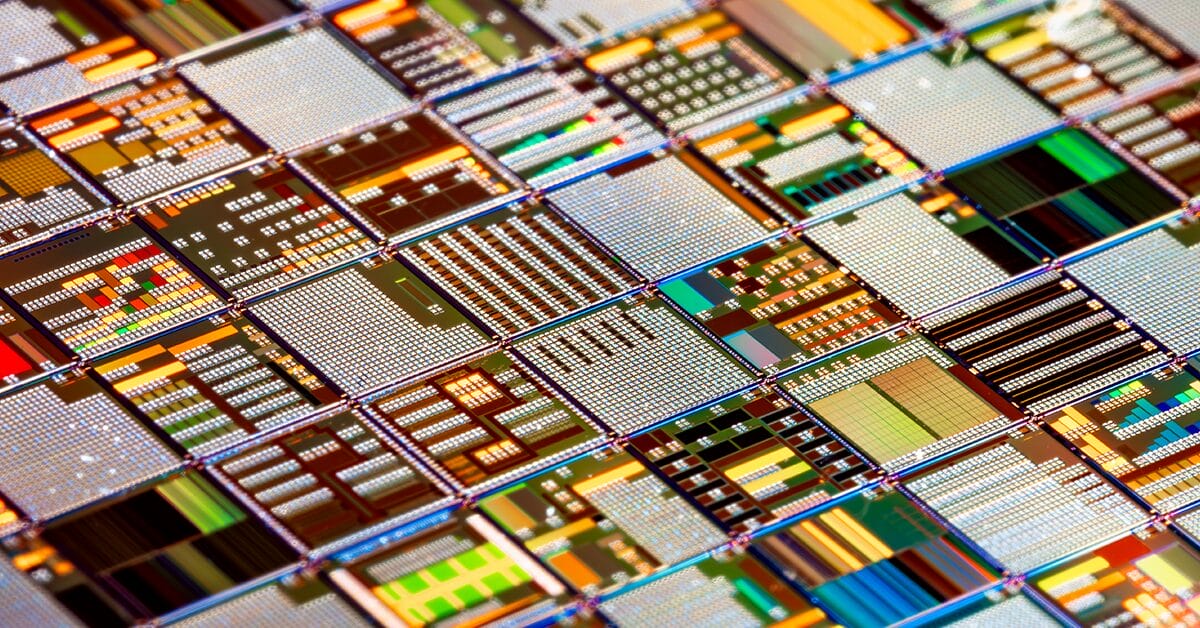

Data harvesting is the automated, large-scale collection of data from sources such as sensors, cameras, and IoT devices, organized so it can be turned into insight. In modern operations, the speed at which a business can harvest and act on data, especially at the edge, has become a defining factor in staying ahead of competitors.

Raw data alone is not where the value sits. The advantage belongs to companies that can collect, process, and act on information faster than the competition. That is the heart of data harvesting today. Rather than simply streaming every sensor reading to a remote system, modern data harvesting captures and processes data right where it is generated, before it loses relevance. In this article, we look at what data harvesting is, how it works, and why the shift from cloud-only collection to edge data harvesting is shaping the next wave of operational advantage for distributed enterprises.

What Is Data Harvesting?

Before exploring the mechanics, let’s settle on a clear definition of data harvesting.

Data harvesting is the automated collection of large volumes of data from a wide range of sources, organized for analysis. In edge operations, that covers a broad set of operational data streams, including:

- PLCs and other industrial controllers on production lines

- SCADA systems and historians in process industries

- Cameras and computer vision systems in stores, warehouses, vehicles, or plants

- Sensors measuring temperature, vibration, pressure, energy, presence, and flow

- Edge gateways and on-prem servers running line-of-business applications

Two characteristics define the practice. The first is automation: data harvesting relies on agents, brokers, and connectors that run continuously, not on humans pulling reports. The second is scale: a single operator may harvest data from thousands of devices across hundreds of sites.

How Data Harvesting Works (Step-by-Step Process)

Data harvesting may sound complex, but it follows a predictable sequence whether you are pulling values from a PLC tag or analyzing a camera feed in a retail store.

- Identify data sources. Define exactly where data will come from: PLC tags, sensor IDs, camera streams, or POS endpoints across the site fleet.

- Collect the data. Use the right tool for the source: OPC UA or MQTT clients for industrial controllers, REST or gRPC APIs for in-store systems, lightweight agents for sensors and gateways.

- Clean and structure the data. Filter noise, fix bad readings, normalize units and timestamps, and align the data with a model downstream systems can use.

- Analyze for insights. Apply analytics, anomaly detection, or AI models to surface patterns, defects, or trends that support real-time decisions.

Connectors, agents, and brokers do most of the heavy lifting, making the process continuous and scalable. Mature setups treat the harvest as an always-on edge data pipeline that feeds local dashboards and central AI platforms in near real time.
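The four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the sensor IDs, the sample payload shape, the alert threshold, and the `collect` function (which returns hard-coded samples in place of a real OPC UA, MQTT, or API client) are all hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Step 1: identify data sources (hypothetical freezer sensor IDs).
SOURCES = {"freezer-01", "freezer-02"}

# Hypothetical alert threshold for this sketch, in degrees Celsius.
ALERT_ABOVE_C = -15.0


@dataclass
class Reading:
    source_id: str
    celsius: float
    timestamp: datetime


def collect():
    """Step 2: stand-in for a real protocol client; returns raw samples."""
    return [
        {"id": "freezer-01", "value": -18.0, "unit": "C", "ts": 1700000000},
        {"id": "freezer-02", "value": 14.0, "unit": "F", "ts": 1700000005},
        {"id": "freezer-01", "value": None, "unit": "C", "ts": 1700000010},
        {"id": "unknown-99", "value": -20.0, "unit": "C", "ts": 1700000015},
    ]


def clean(raw):
    """Step 3: drop bad or unknown samples, normalize units and timestamps."""
    readings = []
    for sample in raw:
        if sample["id"] not in SOURCES or sample["value"] is None:
            continue
        value = sample["value"]
        if sample["unit"] == "F":
            value = (value - 32.0) * 5.0 / 9.0  # Fahrenheit to Celsius
        stamp = datetime.fromtimestamp(sample["ts"], tz=timezone.utc)
        readings.append(Reading(sample["id"], round(value, 2), stamp))
    return readings


def analyze(readings):
    """Step 4: surface readings that are warmer than the alert threshold."""
    return [r for r in readings if r.celsius > ALERT_ABOVE_C]


if __name__ == "__main__":
    for alert in analyze(clean(collect())):
        print(f"{alert.source_id} at {alert.celsius} C, {alert.timestamp.isoformat()}")
```

In a real deployment each function would typically be a separate, continuously running component, with the threshold check replaced by whatever analytics or model the use case calls for.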

Common Methods and Sources of Data Collection

Data can be gathered in many ways, and the right combination depends on the asset, the protocol, and the kind of insight the business is after.

Methods of Data Collection

- Industrial protocols: OPC UA, Modbus, MQTT, and EtherNet/IP pull data directly from PLCs, drives, and DCS systems.

- APIs: structured access to line-of-business systems such as MES, WMS, ERP, and POS.

- Computer vision: cameras with on-device models extract events like queue length, shelf availability, or PPE compliance.

- Sensors and tags: purpose-built devices stream temperature, vibration, energy, or RFID readings.

Sources of Data

- Production lines, machines, and CNC tools in manufacturing plants

- Cold chain, freezers, and HVAC systems in retail stores and warehouses

- Vehicles, robots, and AGVs in distribution and logistics

- POS terminals, self-checkout, and digital signage

- Substations, pumps, turbines, and meters in energy and utilities

This is exactly why data collection at the edge matters. The most valuable signals now originate far from the cloud.

Why Data Harvesting Matters for Businesses Today

Data harvesting has moved from a back-office activity to a core part of how industrial and retail operators compete. Decisions that used to be made monthly or weekly now need to happen in seconds, and that is only possible with a steady flow of fresh data from every site.

- Explosion of operational data: plants and stores generate more sensor, camera, and machine data than ever, and most of it never reaches a cloud.

- Need for faster decisions: a stoppage on a line or a stockout on a shelf costs real money for every minute it lasts.

- AI and analytics dependency: predictive maintenance, quality inspection, and demand forecasting all depend on continuous, high-quality data from the edge.

How Data Harvesting Becomes a Competitive Advantage

The competitive value of data harvesting does not come from sitting on more data. It comes from what a steady, structured, real-time flow lets an operator actually do at every site.

- Faster decision making. Real-time signals from sensors and cameras let plant and store teams act on what is happening now, not what was reported yesterday.

- Better customer and operations understanding. Continuous data from POS, cameras, and connected equipment fuels personalization in retail and process optimization in manufacturing.

- Operational efficiency. Harvested data exposes line bottlenecks, energy waste, and inefficiencies that can be addressed at every site.

- Predictive capabilities. With enough historical and live data from PLCs and sensors, operators can forecast demand and anticipate equipment failures before they affect production or customers.

The Shift Toward Edge Data Harvesting

For years, the default was to ship every reading to a central cloud or data lake and process it there. That model is changing for operators that collect data across many distributed sites. Cloud-only systems become slow and expensive when data is generated by thousands of PLCs, sensors, and cameras, because every reading has to cross the network before anything can act on it. Edge data harvesting is the response. Data is collected and processed near the source, on local devices or on edge infrastructure. Only what matters is forwarded to the cloud.

The benefits show up quickly:

- Real-time decisions: insights and actions happen on site, not after a round trip.

- Lower latency: safety- and quality-critical workloads avoid long network paths.

- Reduced bandwidth usage: only relevant, aggregated data is shipped to the cloud.

- Resilience: sites keep operating even when the link to the cloud is down.
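Two of these benefits, reduced bandwidth and resilience, can be illustrated with a small sketch. It assumes a hypothetical `send` callable that returns `True` when the cloud accepts a record and `False` when the link is down; the class name, the buffer size, and the summary fields are illustrative choices, not part of any specific product.

```python
from collections import deque
from statistics import mean


def summarize(window):
    """Collapse a window of raw readings into one small summary record."""
    return {
        "count": len(window),
        "min": min(window),
        "max": max(window),
        "mean": round(mean(window), 2),
    }


class StoreAndForward:
    """Buffer summaries locally and forward them whenever the uplink works."""

    def __init__(self, send, max_buffered=1000):
        self._send = send  # hypothetical uplink: returns True on success
        # When the buffer is full, the oldest summaries are dropped first.
        self._buffer = deque(maxlen=max_buffered)

    def publish(self, summary):
        self._buffer.append(summary)
        self.flush()

    def flush(self):
        while self._buffer:
            if not self._send(self._buffer[0]):
                break  # link is down: keep the data locally and retry later
            self._buffer.popleft()

    @property
    def pending(self):
        return len(self._buffer)
```

The site keeps summarizing and buffering while the link is down, and the backlog drains in order the next time `publish` or `flush` succeeds. Sending one summary per window instead of every raw reading is what reduces the traffic to the cloud.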

Retail is a familiar example: stores using edge data collection analyze customer behavior, queue lengths, and shelf availability in real time, then adjust staffing or layout immediately. For more, see how edge computing is transforming the retail experience. The same pattern applies in manufacturing, where vibration data from a motor can trigger a maintenance ticket on the spot.
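The manufacturing case can be sketched as a local check that runs on the edge device. This is a simplified illustration: the RMS limit, the units, and the `open_ticket` callback are hypothetical, and real vibration monitoring would normally use machine-specific limits and more robust signal processing.

```python
import math

# Hypothetical alarm limit for this sketch; real limits depend on the machine.
RMS_LIMIT_MM_S = 4.5


def rms(samples):
    """Root mean square of a window of vibration velocity samples."""
    return math.sqrt(sum(s * s for s in samples) / len(samples))


def check_motor(motor_id, samples, open_ticket):
    """Open a maintenance ticket locally if the vibration level is too high."""
    level = rms(samples)
    if level > RMS_LIMIT_MM_S:
        open_ticket(motor_id, level)  # hypothetical callback into a ticketing system
        return True
    return False
```

Because the check runs next to the motor, the ticket can be raised without waiting for a round trip to the cloud.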

Edge Data Collection vs Traditional Data Collection

The contrast between traditional and edge approaches is sharp once you put them side by side. Traditional models centralize everything in the cloud. Edge data collection distributes intelligence closer to the source, which changes both the economics and the speed of insight.

| Traditional Data Collection | Edge Data Collection |
| --- | --- |
| Central cloud processing | Local processing at the edge |
| High latency | Real-time processing |
| High bandwidth usage | Optimized bandwidth usage |
| Delayed insights | Instant insights |
| Site dependent on connectivity | Site keeps running if cloud is unreachable |

In practice, an edge data collection solution enables faster, more efficient decision-making than purely cloud-based methods, especially for distributed operations with many physical sites.

Best Practices for Ethical and Effective Data Collection

Strong data harvesting programs combine speed and scale with discipline. The same automation that makes the harvest powerful also makes mistakes easier to repeat across thousands of sites.

- Follow regulations: align processes with GDPR, CCPA, NIS2, and any local data protection or critical infrastructure laws.

- Pass only relevant data to the cloud: limit what is forwarded to the central data lake to what the use case genuinely needs.

- Ensure data security end-to-end: protect data in transit and at rest as it moves between edge sites and the cloud.

- Manage edge workloads as a fleet: treat the harvest stack as applications that must be deployed, updated, and monitored consistently across every site.

The Future of Data Harvesting: Real-Time, AI, and Edge

Data harvesting is evolving fast, and the direction is clear. The future favors systems that combine continuous collection, intelligent processing, and decisions made as close to the data as possible.

- AI at the edge: harvested data feeds models running on local hardware, automating quality inspection, predictive maintenance, and local decisions.

- Real-time analytics: operators are moving from delayed reports to instant insight at the edge.

- Edge-first architectures: more data is processed close to where it is generated, with the cloud reserved for what genuinely benefits from centralization.

Data harvesting is becoming one of the clearest competitive advantages a distributed operator can build. Turning data into fast, actionable insights at the edge requires the right infrastructure to manage devices, applications, and pipelines at scale.

Conclusion

Data harvesting has shifted from a niche technical activity to a core driver of competitive advantage for enterprises across verticals. The companies winning today are collecting data from sensors, PLCs, cameras, and systems at every site, and turning it into action almost immediately. That is why the conversation has moved from cloud-only collection to edge data harvesting, where insights happen where the data is born. To capture the full value, businesses need a clear strategy, ethical guardrails, and infrastructure built for distributed, real-time operations across every site they run.

Frequently Asked Questions

The questions below address common points of confusion when teams begin evaluating IT/OT convergence and the role of Edge Computing.