Networking at the Edge: What it takes to make edge applications communicate securely and reliably

Edge orchestration discussions often focus on deploying containers to remote hosts, as if getting a workload to start were the main challenge. In reality, that is only the beginning.

An application running at the edge, whether on a single host or in a small cluster, depends on a range of surrounding capabilities to function reliably and securely. In previous articles, we have covered foundational services such as distributed secrets management and distributed image registries. In this article, we turn to another critical layer: networking at the edge.

What is Edge Networking?

Edge networking is not a single problem but a set of interconnected challenges and requirements:

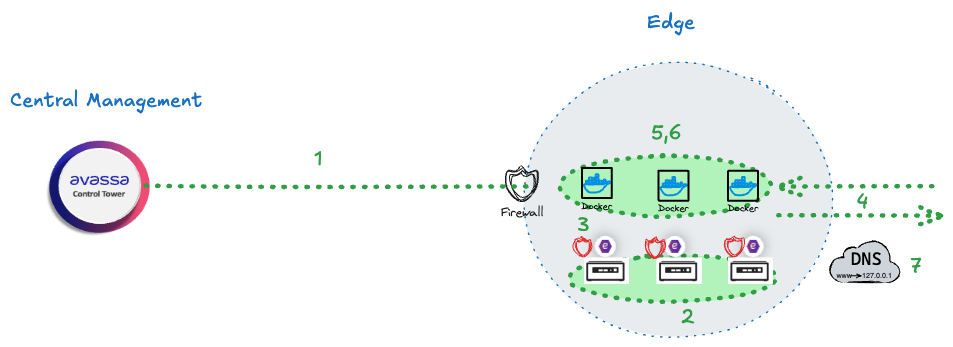

- Connectivity to the central management component. In many environments, inbound connections are not permitted, and OT networks may be segmented or isolated, requiring outbound-only communication patterns or proxy-based solutions.

- Cluster networking. When multiple edge hosts form a cluster, the cluster network must be securely established, authenticated, and correctly configured.

- Host and workload protection. Edge hosts and their applications need local firewall configuration and controlled network exposure.

- Ingress and egress networking. Edge applications may expose REST endpoints for AI inference, local web interfaces, MQTT brokers, or other services that must be reachable in a controlled manner.

- Secure application networks. Applications often consist of multiple containers, running on the same host or distributed across a cluster. These components must communicate over a secure, micro-segmented network.

- Application-to-application networking. Mechanisms are required to allow selected applications to communicate while maintaining isolation boundaries.

- DNS services. Stable DNS naming is required to support service discovery, container mobility, replication, and local client access via ingress endpoints.

In the following sections, we explore how these networking requirements differ from traditional cloud assumptions and what is required to address them in distributed edge environments.

1. Connectivity to the Central Management Component

Edge environments are often characterized by intermittent, low-bandwidth, or high-latency connectivity. In many cases, inbound connections are not permitted, and OT networks may be segmented or isolated from external systems.

Edge orchestration solutions must be able to operate in environments where inbound firewall openings are not allowed, and they must ensure the confidentiality and integrity of management traffic. The Avassa Edge Platform is designed around outbound-initiated, encrypted communication from the Edge Enforcer to the Control Tower over standard ports. No incoming ports or VPNs are needed.

Communication between the Edge Enforcer and the Control Tower is handled through a loosely coupled, asynchronous pub/sub mechanism. This model decouples edge execution from central management operations. If a site is temporarily disconnected, management actions initiated from the Control Tower do not “time out” or fail; instead, they are queued and applied once connectivity is restored. Similarly, state updates from the edge are synchronized when communication is re-established.
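The store-and-forward behaviour can be illustrated with a minimal sketch. It is not Avassa's implementation: the `ManagementChannel` class and its methods are hypothetical and model only the idea that actions published while a site is offline are held, never failed, and delivered in order once the link returns.

```python
from collections import deque
from typing import Callable


class ManagementChannel:
    """Hypothetical store-and-forward channel from a central controller to one site."""

    def __init__(self, deliver: Callable[[dict], None]) -> None:
        self._deliver = deliver              # callback that applies an action at the site
        self._pending: deque[dict] = deque()
        self.connected = False

    def publish(self, action: dict) -> None:
        # Never time out or fail: queue the action, then deliver it if the link is up.
        self._pending.append(action)
        self._flush()

    def set_connected(self, connected: bool) -> None:
        self.connected = connected
        self._flush()                        # reconnecting drains the backlog

    def _flush(self) -> None:
        # Deliver queued actions in publish order while the site is reachable.
        while self.connected and self._pending:
            self._deliver(self._pending.popleft())

    @property
    def backlog(self) -> int:
        return len(self._pending)
```

For example, publishing a deploy action while `connected` is `False` only grows `backlog`; calling `set_connected(True)` then applies it. A real system would also persist the queue and deduplicate superseded actions, which this sketch omits.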

When connectivity is unavailable, the edge workload must continue to operate autonomously using the last known configuration. This preserves availability and lets applications run without relying on continuous cloud reachability.
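A minimal sketch of the "last known configuration" fallback follows, assuming a hypothetical `fetch_remote` callable that raises `ConnectionError` when the central component is unreachable and a local JSON cache file. Neither is part of the Avassa API; they only illustrate the pattern.

```python
import json
from pathlib import Path
from typing import Callable


def load_config(fetch_remote: Callable[[], dict], cache_file: Path) -> dict:
    """Return the current config, falling back to the last known one when offline.

    fetch_remote is a hypothetical callable that raises ConnectionError when
    the central management component cannot be reached.
    """
    try:
        config = fetch_remote()
    except ConnectionError:
        if cache_file.exists():
            # Offline: keep running on the last configuration we successfully received.
            return json.loads(cache_file.read_text())
        raise  # no cached config yet, so there is nothing safe to run
    # Online: persist the fresh config so a later outage can fall back to it.
    cache_file.write_text(json.dumps(config))
    return config
```

The key design choice is that a fetch failure is not an error for a site that has been configured at least once; only a site that has never received a configuration refuses to start.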

To further accommodate constrained links, container image distribution is optimized to minimize network load. Only the required image layers and incremental differences are transferred to the edge, which reduces bandwidth consumption and avoids unnecessary data movement across low-bandwidth connections.
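Because OCI image layers are content-addressed by digest, a site can work out what it is missing by comparing digests before pulling anything. The sketch below shows only that layer-level comparison (not any finer-grained delta transfer); the `Layer` type, `plan_pull` function, and digest strings are hypothetical placeholders.

```python
from typing import NamedTuple


class Layer(NamedTuple):
    digest: str  # content address, e.g. "sha256:..."
    size: int    # compressed size in bytes


def plan_pull(manifest: list[Layer], local_digests: set[str]) -> tuple[list[Layer], int]:
    """Return the layers a site still needs (in manifest order) and their total size.

    A digest already present locally, such as a shared base image, is skipped,
    so it never has to cross the constrained link again.
    """
    seen: set[str] = set()
    missing = []
    for layer in manifest:
        if layer.digest not in local_digests and layer.digest not in seen:
            missing.append(layer)
            seen.add(layer.digest)
    return missing, sum(layer.size for layer in missing)
```

For an application update that changes only its top layer, a site that already holds the base and dependency layers transfers just that one layer.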

In more restricted environments, proxy-based setups can be used to bridge between segmented networks and the central management component while maintaining encrypted communication channels.

2. Cluster Networking

Many edge workloads are business-critical and require high availability. Running them on a single host is often insufficient, which means clustering must be supported even at small sites. At the same time, edge deployments may span hundreds or thousands of locations where no dedicated IT personnel are available to manually configure, troubleshoot, or maintain cluster networking.

Cluster formation at the edge must therefore be fully automated, secure by default, and resilient to constrained or segmented site networks.

Within a site, the Avassa Edge Platform automatically establishes secure host-to-host connectivity using mutual TLS (mTLS). These encrypted channels are used for control-plane communication between Edge Enforcers, including coordination and container image replication.
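What makes TLS "mutual" is that both ends present a certificate and both ends verify the other against a trusted CA. The sketch below shows that configuration with Python's standard `ssl` module; the certificate, key, and cluster CA file paths are hypothetical, and this is not how the Edge Enforcer is implemented.

```python
import ssl
from typing import Optional


def server_context(cert: Optional[str] = None, key: Optional[str] = None,
                   cluster_ca: Optional[str] = None) -> ssl.SSLContext:
    """Context for the accepting host: presents its own cert and demands one from the peer."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)   # starts with no trusted CAs loaded
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    ctx.verify_mode = ssl.CERT_REQUIRED              # reject peers without a valid client cert
    if cert and key:
        ctx.load_cert_chain(certfile=cert, keyfile=key)
    if cluster_ca:
        ctx.load_verify_locations(cafile=cluster_ca)  # trust only the cluster's own CA
    return ctx


def client_context(cert: Optional[str] = None, key: Optional[str] = None,
                   cluster_ca: Optional[str] = None) -> ssl.SSLContext:
    """Context for the connecting host: verifies the server and presents its own cert."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_CLIENT)   # CERT_REQUIRED and hostname checks by default
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if cert and key:
        ctx.load_cert_chain(certfile=cert, keyfile=key)  # the client proves its identity too
    if cluster_ca:
        ctx.load_verify_locations(cafile=cluster_ca)
    return ctx
```

Each host would wrap its sockets with `wrap_socket(sock, server_side=True)` or `wrap_socket(sock, server_hostname=...)`. Automating cluster formation then largely means issuing each host a certificate from a per-site CA without manual steps.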

Application traffic is handled separately through encrypted overlay networks (described below), ensuring that workload communication remains isolated from control traffic and protected even when the underlying site network cannot be trusted.

This separation of control-plane and data-plane networking, combined with automated cluster formation, enables high availability at the edge without introducing manual network configuration or operational complexity per site.

3. Protecting Edge Hosts and Applications with Local Firewall Configuration

At the edge, there is rarely a centralized perimeter firewall that can reliably enforce workload isolation. Edge hosts are often deployed in environments where local networks cannot be fully trusted, where physical access may be less controlled, and where segmentation is inconsistently implemented. Without host-level enforcement, applications risk unintended exposure or lateral movement between workloads.

To address this, the Edge Enforcer configures local networking and firewall rules for each host. By default, incoming connections from external networks are blocked unless explicitly allowed via ingress configurations; only traffic on designated protocols and ports is permitted.

Outbound access is also controlled per application. External connectivity is defined according to application policies, ensuring that workloads do not gain unrestricted access to surrounding networks. This reduces the attack surface and helps enforce least-privilege networking at the host level.
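A default-deny host policy can be expressed in a few lines of nftables. The sketch below renders an inbound allowlist of (protocol, port) pairs into an nftables ruleset whose chain policy is `drop`. The function and table names are hypothetical, and nothing here implies that the Edge Enforcer uses nftables.

```python
def render_input_chain(allow: list[tuple[str, int]], table: str = "edge_host") -> str:
    """Render an nftables input chain that drops everything not explicitly allowed."""
    lines = [
        f"table inet {table} {{",
        "  chain input {",
        "    type filter hook input priority 0; policy drop;",
        "    ct state established,related accept",
        '    iif "lo" accept',
    ]
    # Replies to permitted outbound traffic and loopback are accepted above;
    # everything below is an explicit ingress exception.
    for proto, port in sorted(set(allow)):
        if proto not in ("tcp", "udp"):
            raise ValueError(f"unsupported protocol: {proto}")
        if not 0 < port < 65536:
            raise ValueError(f"invalid port: {port}")
        lines.append(f"    {proto} dport {port} accept")
    lines += ["  }", "}"]
    return "\n".join(lines)
```

Calling `render_input_chain([("tcp", 8443)])` yields a ruleset where only TCP port 8443 is reachable from outside. Any port not listed falls through to `policy drop`.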

4. Enabling Ingress and Egress Networking to Edge Applications

Edge applications often need to expose services such as REST endpoints for AI inference, industrial APIs, or local web-based interfaces. At the same time, they may require controlled outbound connectivity to external systems. Both ingress and egress traffic must therefore be explicitly managed.

The Avassa Edge Platform supports controlled ingress networking through three primary models:

- IP Pools – A pool of pre-allocated IP addresses can be configured on the host network. When an application requires ingress exposure, an address from the pool is assigned and bound to the application. This provides predictable addressing and clear separation between workloads.

- DHCP-based addressing – In environments where static IP allocation is not preferred, applications can obtain addresses dynamically via DHCP, integrating with existing site network infrastructure.

- Port forwarding – Applications can expose specific ports on the host interface, forwarding traffic from designated external ports to internal container services. This is mostly used during development and is not recommended for production scenarios because of the risk of port conflicts.
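The IP pool model can be sketched as a small allocator that binds one address from a pre-defined host-network range to each application. The `IPPool` class and the example range are hypothetical. The sketch assumes that an application keeps its address until it is explicitly released, which is what gives the predictable addressing described above.

```python
import ipaddress


class IPPool:
    """Hypothetical allocator binding addresses from a host-network pool to applications."""

    def __init__(self, first: str, last: str) -> None:
        start, end = ipaddress.IPv4Address(first), ipaddress.IPv4Address(last)
        self._free = [ipaddress.IPv4Address(i) for i in range(int(start), int(end) + 1)]
        self._bound: dict[str, ipaddress.IPv4Address] = {}

    def assign(self, app: str) -> ipaddress.IPv4Address:
        # Idempotent: an application keeps the same ingress address across restarts.
        if app in self._bound:
            return self._bound[app]
        if not self._free:
            raise RuntimeError("ingress IP pool exhausted")
        addr = self._free.pop(0)
        self._bound[app] = addr
        return addr

    def release(self, app: str) -> None:
        # Return the address to the pool when the application is removed.
        addr = self._bound.pop(app, None)
        if addr is not None:
            self._free.append(addr)
            self._free.sort()
```

Unlike port forwarding, two applications can both listen on port 443 because each receives its own address, so port conflicts cannot arise.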

Ingress exposure is explicitly defined in the application specification. The Edge Enforcer configures the required networking and local firewall rules, ensuring that only intended protocols and ports are reachable.

On the egress side, outbound connectivity is restricted by default. Applications do not have unrestricted access to external networks. Egress access is centrally defined and remotely configured, allowing only explicitly permitted destinations and services.

Both ingress and egress policies can be fine-grained and scoped to specific subnets, enabling precise control over which network segments an application may communicate with. This supports micro-segmentation and helps enforce least-privilege networking at the edge.
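Subnet-scoped egress can be modelled as a default-deny check against a list of (subnet, ports) rules. The `EgressRule` type and `egress_allowed` function are hypothetical illustrations, not the platform's policy format; the same matching logic would apply to source subnets on the ingress side.

```python
import ipaddress
from typing import NamedTuple


class EgressRule(NamedTuple):
    network: str              # destination subnet in CIDR form, e.g. "10.20.0.0/16"
    ports: frozenset[int]     # allowed destination ports (empty set = any port)


def egress_allowed(dest_ip: str, port: int, rules: list[EgressRule]) -> bool:
    """Default deny: outbound traffic passes only if some rule covers both subnet and port."""
    addr = ipaddress.ip_address(dest_ip)
    for rule in rules:
        net = ipaddress.ip_network(rule.network)
        # Membership is False for mismatched IP versions, so IPv6 never matches an IPv4 rule.
        if addr in net and (not rule.ports or port in rule.ports):
            return True
    return False
```

For example, an application that reads Modbus TCP data from an OT segment and uploads results to one upstream host might get `[EgressRule("10.20.0.0/16", frozenset({502})), EgressRule("203.0.113.5/32", frozenset({443}))]`, and every other destination is refused.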

5. Secure Application Networks and Micro-Segmentation

In many edge deployments, applications share the same physical network and often the same hosts. Without explicit isolation, containers can communicate freely over the local network, increasing the risk of unintended access or lateral movement between workloads. Traditional perimeter-based security models offer limited protection in these distributed and often flat network environments.

To address this, application instances are automatically connected to isolated application networks (VXLAN) across edge sites. These overlay networks are secured using encrypted tunnels (WireGuard) to ensure confidentiality and integrity of service-to-service communication.

By default, application network traffic remains isolated between applications unless explicitly configured otherwise. This micro-segmentation enforces strict communication boundaries, reducing the risk of lateral movement and ensuring that only intended services can interact across the site or cluster.
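Isolation in a VXLAN overlay rests on the 24-bit VXLAN Network Identifier (VNI) carried in every encapsulated frame: a receiving host delivers a frame only into the network that owns its VNI. The sketch below packs and parses the 8-byte header defined in RFC 7348. It assumes, hypothetically, that each application network is given its own VNI; how Avassa assigns VNIs, and how the resulting UDP packets are carried through WireGuard, are not shown.

```python
import struct

VXLAN_UDP_PORT = 4789  # IANA-assigned destination port for VXLAN (RFC 7348)
VNI_MAX = 2**24 - 1    # the VNI field is 24 bits wide


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for the given network identifier."""
    if not 0 <= vni <= VNI_MAX:
        raise ValueError("VNI must fit in 24 bits")
    flags = 0x08  # "I" flag set: the VNI field is valid
    # Layout: flags (1 byte) | reserved (3) | VNI (3) | reserved (1)
    return struct.pack("!B3xI", flags, vni << 8)


def vni_of(header: bytes) -> int:
    """Extract the VNI that decides which application network a frame belongs to."""
    flags, word = struct.unpack("!B3xI", header[:8])
    if not flags & 0x08:
        raise ValueError("VNI not marked valid")
    return word >> 8
```

The 24-bit field allows about 16 million distinct networks, so giving every application its own segment does not run into the 4,094-network limit of VLAN tags.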

6. Application-to-Application Networking

Strict micro-segmentation improves security, but real-world systems rarely operate in complete isolation. Edge solutions often consist of multiple applications that need to exchange data: for example, an AI inference service consuming data from a local ingestion pipeline, or an MQTT broker feeding processed data into a visualization service.

The challenge is enabling this communication without collapsing isolation boundaries and reverting to flat networking.

To address this, selected applications can be explicitly connected through shared application networks. Applications that are assigned the same network identifier are placed into a common encrypted overlay, enabling secure and scoped inter-application communication.

This model preserves default isolation while allowing deliberate and well-defined communication paths. Instead of opening broad network access between workloads, connectivity is treated as an explicit design decision — aligned with least-privilege principles and controlled at the orchestration layer.
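The "shared network identifier" rule can be reduced to a simple reachability check: two applications can talk only if they share at least one network, and each application implicitly owns a private one. The `NetworkPolicy` class and the network names below are hypothetical and model only this rule, not the platform's configuration syntax.

```python
from collections import defaultdict


class NetworkPolicy:
    """Hypothetical model: every app has a private network; shared networks are opt-in by name."""

    def __init__(self) -> None:
        self._members: dict[str, set[str]] = defaultdict(set)

    def attach(self, app: str, network: str) -> None:
        # Assigning the same network identifier to two apps places them in one overlay.
        self._members[network].add(app)

    def networks_of(self, app: str) -> set[str]:
        shared = {net for net, apps in self._members.items() if app in apps}
        return shared | {f"private:{app}"}  # implicit per-application network

    def can_talk(self, a: str, b: str) -> bool:
        # Reachability needs a common network; there is no fallback to flat "any-to-any".
        return bool(self.networks_of(a) & self.networks_of(b))
```

Attaching an MQTT broker and a visualization service to a hypothetical `telemetry` network lets them communicate, while an inference service on a separate `ingest` network still cannot reach either of them.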

7. DNS Services

In distributed edge environments, IP addresses are not stable identifiers. Containers may restart, migrate between hosts, or scale horizontally. When applications are micro-segmented across isolated overlay networks, relying on static addressing quickly becomes brittle and operationally complex.

Without integrated service discovery, teams often resort to hard-coded IPs, manual configuration updates, or external DNS dependencies — all of which introduce fragility and increase operational risk.

To address this, Avassa includes a built-in DNS service that operates within application networks. Service instances are automatically registered under consistent and meaningful DNS names, allowing applications to communicate using logical service identifiers rather than fixed IP addresses.

This DNS functionality works natively within isolated VXLAN/WireGuard overlays, enabling reliable name resolution across hosts and within clusters while preserving segmentation boundaries. When applications scale, restart, or move, DNS records are updated automatically.
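A network-scoped service registry captures the two properties described above: re-registering an instance after a restart or move replaces its old address, and lookups only see names in the caller's own network. The `ServiceRegistry` class, the service names, and the addresses are hypothetical, and the sketch models the record table only, not the DNS wire protocol.

```python
from collections import defaultdict


class ServiceRegistry:
    """Hypothetical per-network DNS table: names resolve only inside the network that owns them."""

    def __init__(self) -> None:
        # network -> service name -> {instance id: IP address}
        self._records: dict[str, dict[str, dict[str, str]]] = defaultdict(lambda: defaultdict(dict))

    def register(self, network: str, service: str, instance: str, ip: str) -> None:
        # Re-registering an instance (after a restart or move) overwrites its old address.
        self._records[network][service][instance] = ip

    def deregister(self, network: str, service: str, instance: str) -> None:
        self._records.get(network, {}).get(service, {}).pop(instance, None)

    def resolve(self, network: str, service: str) -> list[str]:
        # Lookups are scoped to the caller's network, preserving segmentation boundaries.
        return sorted(self._records.get(network, {}).get(service, {}).values())
```

Clients keep using the logical name while the addresses behind it change, and scaling out simply adds another instance under the same name.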

Additionally, ingress DNS records can be provided so local clients can consistently resolve externally exposed application endpoints.

By integrating DNS into the orchestration layer, service discovery becomes deterministic, automated, and aligned with the network segmentation model — rather than an afterthought layered on top.

Summary

Running containers at the edge is not the hard part. Making them communicate securely, predictably, and reliably over time and across segmented and unreliable networks is.

True edge networking requires outbound-only encrypted management channels, asynchronous synchronization, autonomous site operation, secure cluster connectivity, micro-segmented application overlays, tightly controlled ingress and egress policies, and built-in DNS for service discovery. These capabilities must be fully automated and integrated into the platform itself — not dependent on manual configuration or external components.

Cloud-oriented service mesh architectures can be effective in large, centrally managed environments, but their complexity often makes them ill-suited for constrained edge sites, where simplicity, autonomy, and predictable resource usage are essential.

Edge orchestration is therefore as much about networking architecture as it is about workload scheduling.